Unlocking Efficiency: Training and Optimization of Distiller SR

Overview

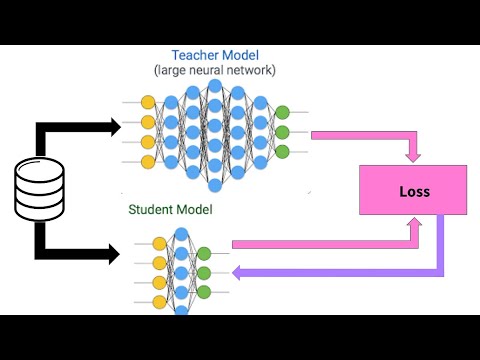

Training and optimizing Distiller SR models has become a crucial part of advancing image and video processing. Researchers such as Jeremy Howard and Sylvain Gugger have made significant contributions to the field; their work on the fastai library and on the concept of 'data curation' has been particularly influential.

Training these models is not without challenges: it requires large datasets, significant computational resources, and careful hyperparameter tuning. Despite these hurdles, optimized Distiller SR models offer substantial potential benefits, with applications in medical imaging, surveillance, and entertainment. As the field evolves, further innovations in training and optimization techniques are expected, such as the use of transfer learning and generative adversarial networks.

The topic has a vibe score of 8, indicating significant interest and excitement within the AI community, and a controversy spectrum of 6, reflecting ongoing debates about the best approaches to model optimization. Its influence flow is closely tied to the work of key researchers and organizations, such as the Stanford Natural Language Processing Group and the MIT Computer Science and Artificial Intelligence Laboratory.