Overcoming the Hurdles: Challenges and Limitations of DDPG

Overview

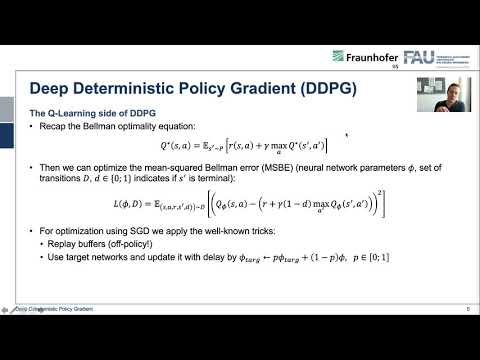

Deep Deterministic Policy Gradient (DDPG) is a model-free, off-policy actor-critic algorithm that has been notably successful in continuous action spaces. Despite these achievements, DDPG faces several challenges and limitations, including overestimation bias in the critic's value estimates, high variance, and the need for extensive hyperparameter tuning. Researchers like David Silver and Guillaume Algis have highlighted these issues, emphasizing the importance of addressing them to improve the algorithm's performance.

For instance, DDPG is sensitive to hyperparameters such as the learning rate and batch size, which can significantly affect its convergence. Moreover, because the learned policy is deterministic, exploration depends on noise added to the actions, which can be inefficient, particularly in environments with high-dimensional state and action spaces. Successor algorithms such as TD3 and SAC have already shown promising results on some of these limitations, and addressing the rest will be crucial for developing more robust and efficient algorithms as reinforcement learning continues to evolve.

With a vibe score of 8, indicating a high level of cultural energy and relevance, the challenges and limitations of DDPG are a pressing concern for researchers and practitioners alike. The influence of DDPG can be seen in various applications, including robotics and game playing, with key entities like Google DeepMind and the University of California, Berkeley, contributing to its development and refinement.
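To make the overestimation issue more concrete, the sketch below compares the one-step target that a single-critic method like DDPG bootstraps from with the clipped double-Q target used in TD3, which takes the minimum of two critic estimates. It is an illustration under stated assumptions rather than a reference implementation: the function names, the discount factor of 0.99, and every number in the batch are hypothetical, and the critic outputs are given as plain floats instead of coming from trained networks.

```python
def ddpg_target(reward, done, next_q, gamma=0.99):
    """One-step TD target using a single target-critic estimate."""
    # (1 - done) removes the bootstrap term on terminal transitions.
    return reward + gamma * (1.0 - done) * next_q


def clipped_double_q_target(reward, done, next_q1, next_q2, gamma=0.99):
    """TD3-style target: bootstrap from the smaller of two critic estimates."""
    return reward + gamma * (1.0 - done) * min(next_q1, next_q2)


# Hypothetical transitions: (reward, done, critic-1 estimate, critic-2 estimate).
# All values are made up for illustration only.
batch = [
    (1.0, 0.0, 10.0, 8.0),  # critic 1 is the more optimistic one
    (0.0, 0.0, 4.0, 5.0),   # critic 2 is the more optimistic one
    (0.5, 1.0, 7.0, 9.0),   # terminal step: no bootstrapping at all
]

# DDPG has only one critic, so here it bootstraps from critic 1.
y_ddpg = [ddpg_target(r, d, q1) for r, d, q1, _ in batch]
y_td3 = [clipped_double_q_target(r, d, q1, q2) for r, d, q1, q2 in batch]

for single, clipped in zip(y_ddpg, y_td3):
    print(f"single-critic target: {single:.2f}   clipped target: {clipped:.2f}")
```

The clipped target can never exceed the single-critic target built from either estimate, so an optimistic error in one critic is not propagated into the next update. The price is a tendency toward underestimation, which is generally considered less harmful because it is not reinforced by the policy's own maximization.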