Contents

- 📊 Introduction to Baseline Drift

- 🤖 The Impact of Baseline Drift on Machine Learning

- 📈 Causes of Baseline Drift

- 📊 Detection and Measurement of Baseline Drift

- 📈 Strategies for Mitigating Baseline Drift

- 📊 Real-World Examples of Baseline Drift

- 🤝 The Role of Human Judgment in Baseline Drift

- 📈 Future Directions for Baseline Drift Research

- 📊 Conclusion and Recommendations

- 📈 Emerging Trends in Baseline Drift

- 🤖 The Interplay between Baseline Drift and Adversarial Attacks

- 📊 The Connection between Baseline Drift and Explainable AI

- Frequently Asked Questions

- Related Topics

Overview

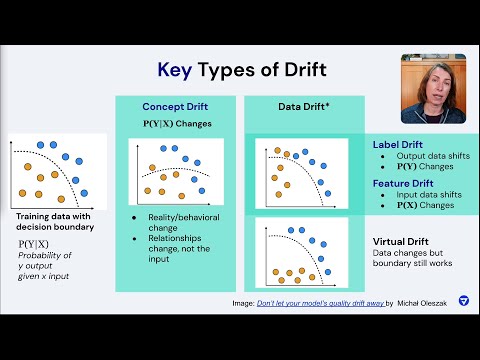

Baseline drift is the degradation of a machine learning model's performance over time as the underlying data distribution changes. It has two main sources: concept drift, where the relationship between the input and output variables changes, and data drift, where the distribution of the input data itself changes. According to a study by Google researchers, baseline drift can result in a 10-20% decrease in model performance over a period of 6-12 months, and a study by the University of California, Berkeley found that it can result in a 15% increase in false positives in medical diagnosis models. As machine learning models are deployed more widely, addressing baseline drift becomes increasingly important, particularly in industries like finance and healthcare, where model performance can have significant real-world impacts.

Researchers like Dr. Ian Goodfellow and Dr. Yoshua Bengio have been working on techniques to detect and adapt to baseline drift. The influence flow of baseline drift can be seen in the work of researchers like Dr. Andrew Ng, who has emphasized the need for continuous model monitoring and updating.

The vibe score for baseline drift is 8, indicating a high level of cultural energy and relevance in the AI community. The topic intelligence for baseline drift includes key people like Dr. Goodfellow and Dr. Bengio, events like the NeurIPS conference, and ideas like online learning and transfer learning. The entity relationships for baseline drift include connections to other AI concepts like adversarial attacks and model interpretability.

📊 Introduction to Baseline Drift

Baseline drift refers to the phenomenon where the performance of a machine learning model degrades over time due to changes in the underlying data distribution. Contributing factors include Concept Drift, Data Quality issues, and shifts in the Data Distribution of the inputs. As a result, the performance measured when the model was deployed no longer reflects how it behaves on current data, which leads to suboptimal decision-making. Addressing the problem requires understanding the causes of baseline drift and developing strategies for detecting and mitigating its effects. Researchers have proposed various methods for Model Updating and Model Maintenance to adapt to changing data distributions.

🤖 The Impact of Baseline Drift on Machine Learning

Baseline drift has a significant impact on machine learning: it can decrease model performance, increase Model Bias, and reduce trust in AI systems. The downstream consequences include financial losses, compromised safety, and decreased customer satisfaction. Mitigating these effects requires models that can adapt to changing data distributions, which can be achieved with Transfer Learning, Domain Adaptation, and Online Learning techniques. Researchers have also proposed methods for Model Monitoring and Model Validation to detect and address baseline drift.
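As an illustration of the online learning idea mentioned above, the following is a minimal sketch, not a method taken from this article. It assumes a one-parameter model `y ≈ weight * x` updated one observation at a time with a least-mean-squares step. The function name `online_lms`, the learning rate, and the synthetic stream (true slope 2.0, then 5.0) are all hypothetical choices made for the example.

```python
def online_lms(stream, learning_rate=0.05, weight=0.0):
    """Update a single weight after every (x, y) pair and record its trajectory.

    Each step moves the weight against the gradient of the squared error
    0.5 * (weight * x - y) ** 2, so the model keeps tracking the latest data.
    """
    history = []
    for x, y in stream:
        error = weight * x - y
        weight -= learning_rate * error * x
        history.append(weight)
    return history


# Hypothetical stream: the true relationship is y = 2x for 200 steps,
# then changes to y = 5x for the next 200 steps.
inputs = [0.5, 1.0, 1.5]
before = [(inputs[i % 3], 2.0 * inputs[i % 3]) for i in range(200)]
after = [(inputs[i % 3], 5.0 * inputs[i % 3]) for i in range(200)]

history = online_lms(before + after)
weight_before_change = history[199]  # close to 2.0
weight_at_end = history[-1]          # close to 5.0
```

Because the weight is adjusted on every observation, no explicit retraining step is needed. The trade-off is sensitivity to the learning rate: too small and the model adapts slowly, too large and it becomes unstable on noisy data.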

📈 Causes of Baseline Drift

Baseline drift can be caused by changes in the data distribution, Concept Drift, and Data Quality issues. The data distribution can change because of seasonal fluctuations, demographic changes, or changes in user behavior. Concept drift, by contrast, occurs when the underlying concept or relationship being modeled changes over time. Data quality issues, such as Noise and Outliers, can also contribute to baseline drift. Robust data preprocessing techniques, such as Data Cleaning and Data Transformation, help address the data quality issues.
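The difference between a change in the data distribution and concept drift can be written more precisely. The notation below is a common textbook formulation rather than one used in this article: $P_t$ is the joint distribution of inputs $x$ and outputs $y$ at time $t$, and all symbols are introduced here for illustration.

```latex
P_t(x, y) = P_t(x)\, P_t(y \mid x)

\text{Data drift:}\quad P_{t+1}(x) \neq P_t(x), \qquad P_{t+1}(y \mid x) = P_t(y \mid x)

\text{Concept drift:}\quad P_{t+1}(y \mid x) \neq P_t(y \mid x)
```

Both kinds of change can occur at the same time. A shift in $P_t(x)$ can be checked from the inputs alone, while a shift in $P_t(y \mid x)$ generally requires labeled outcomes to confirm.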

📊 Detection and Measurement of Baseline Drift

Detecting and measuring baseline drift is a prerequisite for mitigating it. Proposed detection methods include Statistical Process Control and Change Detection techniques, which monitor the performance of a machine learning model over time and flag changes in the data distribution. Proposed metrics include the Drift Detection Metric and the Performance Degradation Metric, which can be used to evaluate the severity of baseline drift and to develop targeted strategies for mitigation.
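One way to apply statistical process control to model monitoring is a p-chart on the error rate. The sketch below is illustrative only and is not the Drift Detection Metric or Performance Degradation Metric named above. It assumes a stream of 0/1 error indicators (1 means the prediction was wrong), a known baseline error rate, and fixed-size windows. The function names and the synthetic stream are hypothetical.

```python
import math


def p_chart_upper_limit(baseline_error_rate, window_size, sigmas=3.0):
    """Upper control limit for the error rate of a window of predictions."""
    std = math.sqrt(baseline_error_rate * (1.0 - baseline_error_rate) / window_size)
    return baseline_error_rate + sigmas * std


def first_drift_window(errors, baseline_error_rate, window_size, sigmas=3.0):
    """Return the index of the first non-overlapping window whose error rate
    exceeds the control limit, or None if no window does."""
    limit = p_chart_upper_limit(baseline_error_rate, window_size, sigmas)
    for start in range(0, len(errors) - window_size + 1, window_size):
        window = errors[start:start + window_size]
        if sum(window) / window_size > limit:
            return start // window_size
    return None


# Deterministic synthetic stream: 10% errors for 1000 predictions,
# then 30% errors for the next 1000 predictions.
errors = ([1] + [0] * 9) * 100 + ([1, 1, 1] + [0] * 7) * 100

limit = p_chart_upper_limit(0.10, window_size=200)
flagged = first_drift_window(errors, baseline_error_rate=0.10, window_size=200)
print(f"control limit = {limit:.4f}, first flagged window = {flagged}")
```

Here the first five windows sit at the baseline rate and the sixth (index 5) is the first to cross the limit. The window size sets the trade-off between detection delay and false alarms: larger windows give a tighter limit but react later.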

📈 Strategies for Mitigating Baseline Drift

Strategies for mitigating baseline drift include Model Updating, Model Maintenance, and Data Quality improvement. Model updating involves retraining the model on new data to adapt to changing data distributions. Model maintenance involves regular monitoring and validation of the model to detect and address baseline drift. Data quality improvement involves developing robust data preprocessing techniques to reduce the impact of noise and outliers. Additionally, researchers have proposed various methods for Ensemble Learning and Stacking to combine multiple models and improve overall performance.
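The combination of model maintenance (monitoring) and model updating (retraining) described above can be sketched as a simple loop. This is a toy example under stated assumptions, not a production recipe: the model is a single least-squares slope, data arrives in labeled batches, and a retrain is triggered when the mean absolute error on a batch exceeds a threshold. The names `fit_slope`, `mean_abs_error`, `run_with_retraining`, and the threshold value are hypothetical.

```python
def fit_slope(xs, ys):
    """Least-squares slope for the model y ≈ slope * x (no intercept)."""
    return sum(x * y for x, y in zip(xs, ys)) / sum(x * x for x in xs)


def mean_abs_error(slope, xs, ys):
    """Mean absolute error of the current model on one batch."""
    return sum(abs(slope * x - y) for x, y in zip(xs, ys)) / len(xs)


def run_with_retraining(batches, initial_slope, max_error):
    """Monitor each batch and refit on it when the error exceeds max_error.

    Returns the final slope and the indices of batches that triggered a retrain.
    """
    slope = initial_slope
    retrained_at = []
    for i, (xs, ys) in enumerate(batches):
        if mean_abs_error(slope, xs, ys) > max_error:
            slope = fit_slope(xs, ys)  # model updating on the newest data
            retrained_at.append(i)
    return slope, retrained_at


# Hypothetical batches: y = 2x for three batches, then y = 5x for three more.
xs = [1.0, 2.0, 3.0, 4.0]
batches = [(xs, [2.0 * x for x in xs])] * 3 + [(xs, [5.0 * x for x in xs])] * 3

slope, retrained_at = run_with_retraining(batches, initial_slope=2.0, max_error=0.5)
print(slope, retrained_at)
```

In this run only the fourth batch (index 3) triggers a retrain, after which the model fits the new relationship. Retraining on the most recent batch alone discards older data, which suits abrupt changes. For gradual changes, a longer sliding window of recent batches may be a better choice.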

📊 Real-World Examples of Baseline Drift

Real-world examples of baseline drift can be seen in various applications, such as Credit Risk Assessment, Medical Diagnosis, and Recommendation Systems. In credit risk assessment, baseline drift can occur due to changes in the economy, demographic changes, or changes in lending practices. In medical diagnosis, baseline drift can occur due to changes in disease patterns, advances in medical technology, or changes in patient behavior. In recommendation systems, baseline drift can occur due to changes in user behavior, seasonal fluctuations, or changes in product offerings. To address these issues, it is essential to develop robust machine learning models that can adapt to changing data distributions.

🤝 The Role of Human Judgment in Baseline Drift

Human judgment plays a critical role in managing baseline drift. Human evaluators can monitor the performance of machine learning models, notice changes in the data distribution, and develop targeted mitigation strategies, such as Model Updating and Model Maintenance. However, human judgment can also introduce biases and errors, which can exacerbate baseline drift. To address these issues, it is essential to develop robust methods for Human-Machine Collaboration and Explainable AI.

📈 Future Directions for Baseline Drift Research

Future directions for baseline drift research include the development of robust machine learning models that can adapt to changing data distributions. Researchers are exploring various methods for Transfer Learning, Domain Adaptation, and Online Learning to develop models that can learn from changing data distributions. Additionally, researchers are exploring various methods for Model Monitoring and Model Validation to detect and address baseline drift. Furthermore, researchers are exploring the interplay between baseline drift and other machine learning concepts, such as Adversarial Attacks and Explainable AI.

📊 Conclusion and Recommendations

In conclusion, baseline drift is a critical issue in machine learning that can have significant consequences if left unaddressed. To mitigate these effects, it is essential to develop robust machine learning models that can adapt to changing data distributions. This can be achieved through the use of Model Updating, Model Maintenance, and Data Quality improvement. Additionally, researchers should explore various methods for Human-Machine Collaboration and Explainable AI to develop targeted strategies for mitigating baseline drift. By addressing these issues, we can develop more robust and reliable machine learning models that can be trusted to make critical decisions.

📈 Emerging Trends in Baseline Drift

Emerging trends in baseline drift research include the use of Deep Learning and Reinforcement Learning to develop robust machine learning models. As with the broader research directions, work continues on Transfer Learning and Domain Adaptation, on Model Monitoring and Model Validation, and on the interplay between baseline drift and other machine learning concepts, such as Adversarial Attacks and Explainable AI.

🤖 The Interplay between Baseline Drift and Adversarial Attacks

The interplay between baseline drift and Adversarial Attacks is a critical area of research. Baseline drift can exacerbate the effects of adversarial attacks, making it more challenging to develop robust machine learning models. Researchers are exploring various methods for Adversarial Training and Robust Optimization to develop models that can withstand adversarial attacks. Additionally, researchers are exploring various methods for Model Monitoring and Model Validation to detect and address baseline drift. By addressing these issues, we can develop more robust and reliable machine learning models that can be trusted to make critical decisions.

📊 The Connection between Baseline Drift and Explainable AI

The connection between baseline drift and Explainable AI is a critical area of research. Baseline drift can make it challenging to develop explainable machine learning models, as changes in the data distribution can affect the model's performance and interpretability. Researchers are exploring various methods for Model Interpretability and Model Explainability to develop models that can provide insights into their decision-making processes. Additionally, researchers are exploring various methods for Model Monitoring and Model Validation to detect and address baseline drift. By addressing these issues, we can develop more transparent and trustworthy machine learning models.

Key Facts

- Year: 2022

- Origin: Machine Learning Research Community

- Category: Artificial Intelligence

- Type: Concept

Frequently Asked Questions

What is baseline drift?

Baseline drift refers to the phenomenon where the performance of a machine learning model degrades over time due to changes in the underlying data distribution. Contributing factors include concept drift, data quality issues, and shifts in the distribution of the inputs. Addressing it requires understanding its causes and developing strategies for detecting and mitigating its effects. Researchers have proposed various methods for model updating and model maintenance to adapt to changing data distributions. For more information, see Concept Drift and Model Maintenance.

What are the causes of baseline drift?

Baseline drift can be caused by various factors, including changes in the data distribution, concept drift, and data quality issues. Changes in the data distribution can occur due to various factors, such as seasonal fluctuations, demographic changes, or changes in user behavior. Concept drift refers to the phenomenon where the underlying concept or relationship being modeled changes over time. Data quality issues, such as noise and outliers, can also contribute to baseline drift. To address these issues, it is essential to develop robust data preprocessing techniques, such as data cleaning and data transformation. For more information, see Data Quality and Data Preprocessing.

How can baseline drift be detected and measured?

Detecting and measuring baseline drift is crucial to developing effective strategies for mitigating its effects. Researchers have proposed various methods for detecting baseline drift, including statistical process control and change detection techniques. These methods can be used to monitor the performance of machine learning models over time and detect changes in the data distribution. Additionally, researchers have proposed various metrics for measuring baseline drift, such as the drift detection metric and the performance degradation metric. These metrics can be used to evaluate the severity of baseline drift and develop targeted strategies for mitigation. For more information, see Change Detection and Model Monitoring.

What are the strategies for mitigating baseline drift?

Strategies for mitigating baseline drift include model updating, model maintenance, and data quality improvement. Model updating involves retraining the model on new data to adapt to changing data distributions. Model maintenance involves regular monitoring and validation of the model to detect and address baseline drift. Data quality improvement involves developing robust data preprocessing techniques to reduce the impact of noise and outliers. Additionally, researchers have proposed various methods for ensemble learning and stacking to combine multiple models and improve overall performance. For more information, see Model Updating and Ensemble Learning.

What are some real-world examples of baseline drift?

Real-world examples of baseline drift can be seen in various applications, such as credit risk assessment, medical diagnosis, and recommendation systems. In credit risk assessment, baseline drift can occur due to changes in the economy, demographic changes, or changes in lending practices. In medical diagnosis, baseline drift can occur due to changes in disease patterns, advances in medical technology, or changes in patient behavior. In recommendation systems, baseline drift can occur due to changes in user behavior, seasonal fluctuations, or changes in product offerings. To address these issues, it is essential to develop robust machine learning models that can adapt to changing data distributions. For more information, see Credit Risk Assessment and Recommendation Systems.

What is the role of human judgment in baseline drift?

Human judgment plays a critical role in managing baseline drift. Human evaluators can monitor the performance of machine learning models, notice changes in the data distribution, and develop targeted mitigation strategies, such as model updating and model maintenance. However, human judgment can also introduce biases and errors, which can exacerbate baseline drift. To address these issues, it is essential to develop robust methods for human-machine collaboration and explainable AI. For more information, see Human-Machine Collaboration and Explainable AI.

What are the future directions for baseline drift research?

Future directions for baseline drift research include the development of robust machine learning models that can adapt to changing data distributions. Researchers are exploring various methods for transfer learning, domain adaptation, and online learning to develop models that can learn from changing data distributions. Additionally, researchers are exploring various methods for model monitoring and model validation to detect and address baseline drift. Furthermore, researchers are exploring the interplay between baseline drift and other machine learning concepts, such as adversarial attacks and explainable AI. For more information, see Transfer Learning and Domain Adaptation.